Architectures of Categorization: From Software Stereotypes to Social Power

"We do not see the world as it is; we see it as we are prepared to see it."

Abstract

In software engineering, a stereotype is a controlled extension of meaning: a declarative role that structures architecture and guides behavior in predictable ways. In psychology and sociology, a stereotype is something far more volatile — a probabilistic, automatic, affect-laden mechanism that shapes perception, identity, and social hierarchy.

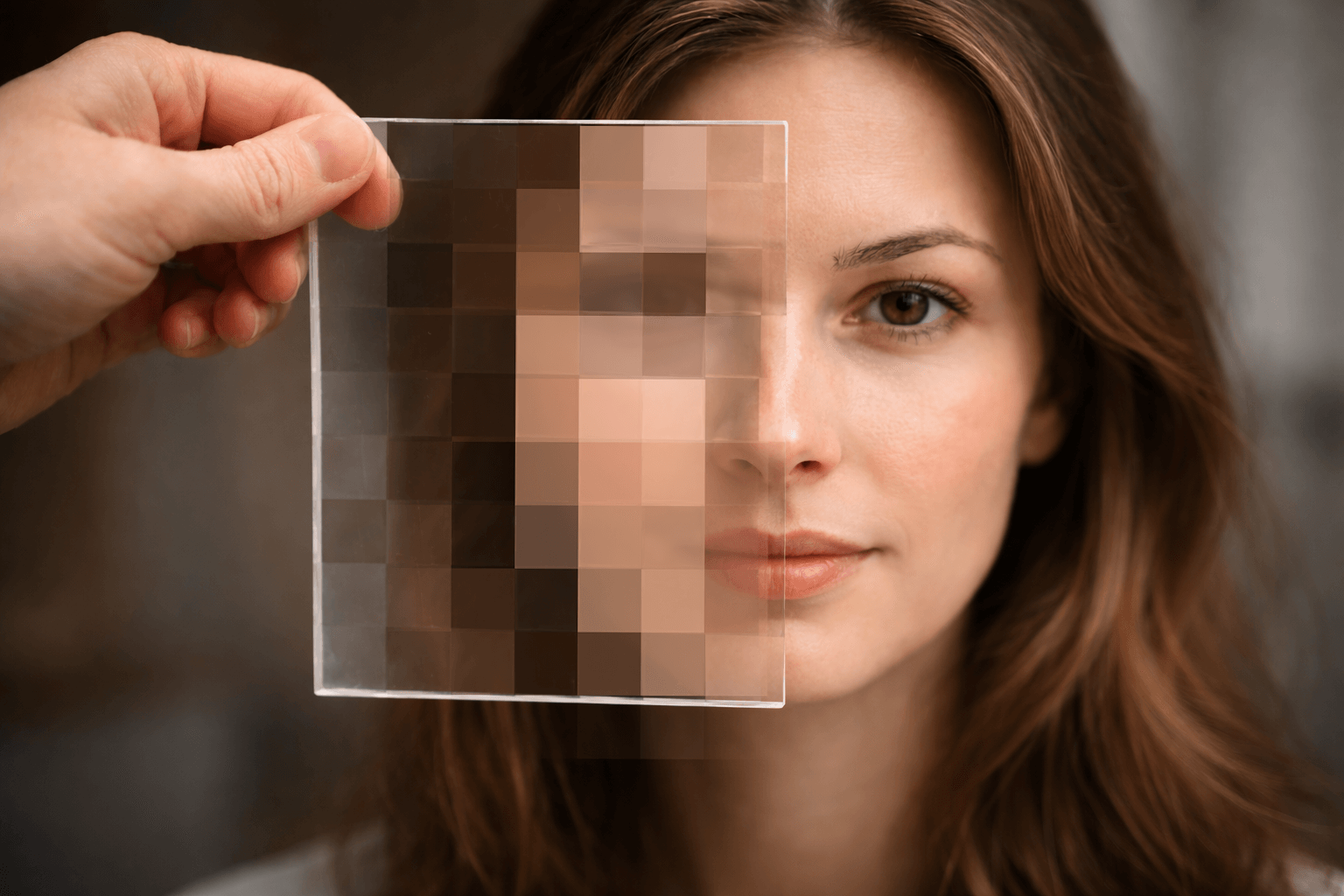

This paper argues that computer science has captured only the structural shell of stereotyping while overlooking its cognitive engine. By examining concrete implementations in UML, frameworks, design patterns, meta-programming, and knowledge-based systems, and contrasting them with foundational research in social psychology, we show that the true divergence lies not in terminology but in ontology. Software stereotypes are explicit tools. Human stereotypes are implicit forces.

The comparison reveals a deeper philosophical tension: classification in code is architectural; classification in society is performative and often political. As computational systems increasingly simulate human inference through probabilistic models and machine learning, the boundary between structural labeling and cognitive stereotyping narrows. The stakes are therefore no longer theoretical. To model categorization is to model power.

Introduction

The word "stereotype" carries very different meanings depending on context. In software engineering, it often denotes a structural role, a formal extension, or a reusable pattern. In psychology and sociology, however, it refers to a deeply rooted cognitive mechanism that shapes perception, judgment, and behavior.

This paper explores both domains. We begin with concrete examples of stereotypes in computer science. We then examine the psychological and sociological understanding of stereotypes, grounded in scientific research. Finally, we compare both perspectives and highlight what computational models fail to capture about the human phenomenon.

Stereotypes in Computer Science

Before turning to society, consider how the word "stereotype" functions in software engineering.

In technical contexts, a stereotype is simply a label that clarifies a role within a system.

1. Architectural Roles

When engineers design a complex application, they often distinguish between different kinds of components. One part of the system stores data. Another processes requests. Another coordinates interactions.

Instead of redefining each component from scratch, designers attach a label that signals its purpose. A component responsible for storing customer information might be labeled as a "data entity." Another that handles incoming requests might be labeled a "controller." These labels do not change what the component is made of. They simply clarify how it is meant to function.

The stereotype here acts as an architectural shorthand. It tells developers: this piece plays a specific role in the larger structure.

2. Framework Conventions

Modern software frameworks go further. They allow developers to declare that a given module is, for example, a "service" (it performs business logic) or a "repository" (it retrieves information from storage).

Once a module is labeled, the framework automatically treats it accordingly. The module might be wired into other parts of the system, granted special lifecycle management, or monitored in specific ways.

Again, the stereotype is explicit and binary. A module either has that role or it does not.
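A minimal Python sketch of such a declaration is shown here. The `stereotype` decorator, the `REGISTRY` table, and the two example classes are hypothetical names invented for illustration; they do not belong to any real framework.

```python
from collections import defaultdict

# Hypothetical registry: role name -> classes declared with that role.
REGISTRY = defaultdict(list)


def stereotype(role):
    """Return a class decorator that declares `role` on the decorated class."""
    def decorate(cls):
        cls.__stereotype__ = role      # the label itself
        REGISTRY[role].append(cls)     # the "framework" now knows about the class
        return cls
    return decorate


@stereotype("service")
class BillingService:
    def charge(self, amount):
        return f"charged {amount}"


@stereotype("repository")
class CustomerRepository:
    def find(self, customer_id):
        return {"id": customer_id}


def has_role(cls, role):
    # Membership is binary: a class either carries the label or it does not.
    return getattr(cls, "__stereotype__", None) == role
```

Here `has_role(BillingService, "service")` is true and `has_role(BillingService, "repository")` is false. Nothing in between can be expressed.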

3. Design Patterns as Behavioral Stereotypes

Engineers also rely on recurring design solutions known as patterns. A "factory" pattern, for instance, centralizes object creation. A "strategy" pattern allows behavior to be swapped dynamically. A "singleton" ensures that only one instance of a component exists.

These are not physical structures but recognizable roles. When someone says, "This part acts as a factory," experienced engineers immediately understand the expectations attached to that role.

Here, stereotype means: a typical structural or behavioral role that appears repeatedly in well-designed systems.

4. Metadata and Domain-Specific Roles

In more advanced systems, developers can attach descriptive metadata to components: this endpoint handles HTTP requests; this function is publicly accessible; this data object must be persisted.

The label activates predefined rules. It is a programmable role.
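One way to picture a label that activates predefined rules is the sketch below. The `RULES` table, the `tagged` decorator, and the `activate` function are assumptions made for this example, not the API of an existing library.

```python
# Hypothetical rule table: metadata label -> action the runtime would take.
RULES = {
    "http_endpoint": lambda name: f"route registered for {name}",
    "public": lambda name: f"{name} exposed without authentication",
    "persisted": lambda name: f"{name} scheduled for storage",
}


def tagged(*labels):
    """Attach descriptive labels to a function or class as plain metadata."""
    def decorate(obj):
        obj.__labels__ = tuple(labels)
        return obj
    return decorate


def activate(obj):
    """Run every predefined rule whose label is present on `obj`."""
    labels = getattr(obj, "__labels__", ())
    return [RULES[label](obj.__name__) for label in labels]


@tagged("http_endpoint", "public")
def list_products():
    return []
```

Calling `activate(list_products)` fires exactly the two rules named by the labels. An unlabeled function triggers none.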

5. Knowledge Representation Systems

Even in artificial intelligence, early knowledge-based systems used predefined categories such as "Doctor," "Teacher," or "Vehicle," each associated with typical properties.

If something was classified as a "Bird," the system assumed it had wings and could usually fly, unless specified otherwise.

This comes closest to human stereotyping, because it involves default expectations. Yet even here, the structure remains formally defined and logically bounded.
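The default-with-exceptions behavior can be sketched as follows. This is a simplified illustration in Python, not a reconstruction of any particular historical system; the `Frame` class and its property names are hypothetical.

```python
class Frame:
    """A category with default properties and an optional parent category."""

    def __init__(self, name, parent=None, **defaults):
        self.name = name
        self.parent = parent
        self.defaults = defaults

    def get(self, prop):
        # Look locally first, then walk up the parent chain.
        frame = self
        while frame is not None:
            if prop in frame.defaults:
                return frame.defaults[prop]
            frame = frame.parent
        raise KeyError(prop)


bird = Frame("Bird", has_wings=True, can_fly=True)
robin = Frame("Robin", parent=bird)                     # inherits every default
penguin = Frame("Penguin", parent=bird, can_fly=False)  # explicit exception
```

The default applies until an exception is declared, and the exception is itself an explicit statement: `penguin.get("can_fly")` is false only because someone wrote it down.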

Across all these examples, the meaning of stereotype in computing is consistent:

It is an explicit, declared role that extends meaning within a controlled architecture.

It does not emerge spontaneously. It carries no emotional charge. It does not resist correction. It does not defend itself.

It is a tool of clarity.

Stereotypes in Psychology and Sociology

1. Origin of the Concept

The term was introduced into social science by Walter Lippmann in Public Opinion (1922). He described stereotypes as "pictures in our heads" — simplified mental representations that mediate between individuals and complex social reality (Lippmann, 1922).

Lippmann did not treat stereotypes merely as prejudice, but as a necessary cognitive simplification process.

2. Cognitive Mechanism

Human stereotypes arise from categorization — a core cognitive process.

Eleanor Rosch’s work on prototype theory (Rosch, 1975; Rosch & Mervis, 1975) demonstrated that categories are organized around graded typicality rather than strict logical boundaries. This laid the foundation for understanding stereotypes as graded, probabilistic expectations attached to a category rather than as fixed definitions.

Daniel Kahneman and Amos Tversky (Tversky & Kahneman, 1974; Kahneman, 2011) showed that human judgment relies on heuristics that systematically deviate from statistical rationality. The representativeness heuristic in particular explains how individuals infer group membership based on resemblance rather than base-rate probabilities.
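A short worked example may make the role of base rates concrete. The numbers below are invented for illustration and are not taken from the cited studies: a group makes up 5% of a population, a description fits 80% of its members, and the same description fits 20% of everyone else.

```python
def posterior(base_rate, p_fit_given_member, p_fit_given_nonmember):
    """P(member | description fits), computed with Bayes' rule."""
    hit = base_rate * p_fit_given_member
    false_alarm = (1 - base_rate) * p_fit_given_nonmember
    return hit / (hit + false_alarm)


# Illustrative values only (assumptions, not empirical data).
p = posterior(base_rate=0.05, p_fit_given_member=0.80, p_fit_given_nonmember=0.20)
```

With these values `p` is roughly 0.17, even though the description resembles the group strongly. Judging by resemblance alone would suggest a far higher figure, which is the gap the representativeness heuristic describes.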

Patricia Devine (1989) demonstrated that stereotype activation can be automatic and independent of personal endorsement, establishing the dual-process distinction between automatic activation and controlled correction.

Key characteristics:

- Probabilistic inference

- Heuristic processing

- Rapid automatic activation

- Low cognitive cost

Activation is largely unconscious and hard to prevent, even when its expression is later corrected.

3. Social Identity and Group Dynamics

Henri Tajfel’s minimal group experiments (Tajfel et al., 1971) demonstrated that mere categorization into arbitrary groups is sufficient to produce in-group favoritism. This led to Social Identity Theory (Tajfel & Turner, 1979), which explains how group membership becomes central to self-concept and intergroup bias.

John Turner’s Self-Categorization Theory (Turner et al., 1987) further clarified how context determines which social identity becomes salient, dynamically modulating stereotype activation.

Stereotypes are therefore embedded in identity structures:

- In-group vs out-group

- Status hierarchies

- Power asymmetries

They are socially situated, not merely cognitive shortcuts.

4. Bias and Resistance to Revision

Human stereotypes are resilient. They persist even in the presence of counterevidence.

Lord, Ross, and Lepper (1979) demonstrated biased assimilation: individuals interpret mixed evidence as supporting their prior beliefs.

Hamilton and Gifford (1976) showed illusory correlation — the tendency to overestimate associations between minority groups and distinctive behaviors.

Subtyping mechanisms (Weber & Crocker, 1983) explain how disconfirming individuals are classified as exceptions rather than triggering category revision.

Unlike software stereotypes, human stereotypes exhibit motivated resistance to change.

5. Affective Dimension

Stereotypes are rarely emotionally neutral.

They are often linked to:

- Fear

- Admiration

- Contempt

- Envy

The affective dimension is not secondary to cognition; it is intertwined with it. Research on implicit evaluation (Fazio et al., 1986) shows that emotional responses to social categories can be triggered automatically, before conscious reflection. Categorization and evaluation occur together.

The Stereotype Content Model (Fiske, Cuddy, Glick, & Xu, 2002) suggests that groups are commonly perceived along two dimensions: warmth and competence. These dimensions map onto distinct emotional reactions. Groups seen as both warm and competent may elicit admiration; groups seen as warm but incompetent may elicit pity; competent but cold groups may elicit envy; groups low on both may elicit contempt. Stereotypes therefore position groups within a moral and relational landscape.

Neuroscientific evidence (Harris & Fiske, 2006) further indicates that extreme negative stereotyping can alter basic social perception, affecting neural responses associated with social cognition.

Emotion also strengthens persistence: affectively congruent information is more easily remembered, reinforcing the stereotype over time. Importantly, this affective response can operate independently of explicit belief. Individuals may consciously reject a stereotype while still experiencing automatic emotional reactions to category cues.

Stereotypes are thus not cold summaries of traits. They are affectively charged schemas that orient attention, bias interpretation, and prepare behavior. They help determine not only what we think about others, but how we feel toward them — and how we are prepared to behave.

6. Stereotype Threat

Claude Steele and Joshua Aronson (1995) demonstrated that awareness of a negative stereotype about one’s group can significantly impair performance on cognitively demanding tasks. This phenomenon, termed "stereotype threat," shows that stereotypes can influence outcomes independent of ability.

Subsequent work (Steele, 2010) emphasized the broader identity-based anxiety mechanisms involved.

This illustrates the performative dimension of stereotypes: they can shape the very outcomes that appear to confirm them.

Comparison and Structural Differences

The contrast between computational stereotypes and human stereotypes is not superficial. It is structural. While both involve categorization and role assignment, they differ fundamentally in mechanism, dynamics, and consequences.

1. Deterministic Labels vs Probabilistic Inference

In software systems, stereotypes are deterministic. A class annotated as @Service is a service. A UML element marked <<entity>> is an entity. There is no gradient, no uncertainty, no probability distribution.

Human stereotypes, by contrast, operate as probabilistic expectations. They implicitly encode statistical generalizations under uncertainty.

Fiske and Neuberg’s continuum model (1990) describes how impression formation moves from category-based processing toward individuated processing depending on motivation and information availability.

The Stereotype Content Model (Fiske, Cuddy, Glick, & Xu, 2002) further shows that stereotype content varies by degree along two dimensions, warmth and competence, rather than being assigned as a single yes-or-no label.

What conventional software stereotypes lack is graded belief, confidence levels, uncertainty propagation, and a multidimensional evaluative structure.
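The contrast between a declared label and a graded belief can be sketched in a few lines. The `BetaBelief` class is a hypothetical illustration that uses a standard Beta distribution to hold an expectation together with its uncertainty; it is not a model proposed in the cited literature.

```python
from dataclasses import dataclass

# Deterministic label: a plain boolean, with no notion of confidence.
is_service = True


@dataclass
class BetaBelief:
    """Graded belief about a yes/no property, held as Beta(alpha, beta) pseudo-counts."""
    alpha: float = 1.0
    beta: float = 1.0

    def update(self, observed: bool) -> None:
        # Each observation shifts the belief a little instead of flipping it.
        if observed:
            self.alpha += 1
        else:
            self.beta += 1

    @property
    def mean(self) -> float:
        return self.alpha / (self.alpha + self.beta)

    @property
    def variance(self) -> float:
        n = self.alpha + self.beta
        return self.alpha * self.beta / (n * n * (n + 1))


belief = BetaBelief()
for observation in [True, True, False, True]:
    belief.update(observation)
```

After these four observations the expectation sits at about 0.67 and its variance has shrunk relative to the starting point. The boolean label has no counterpart for either quantity.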

2. Explicit Declaration vs Automatic Activation

In computer science, stereotypes are intentionally declared. They are introduced by design. They do not activate unless specified.

Human stereotypes activate automatically upon exposure to a cue (name, accent, visual marker, category label). This activation is rapid and largely unconscious. It occurs even when individuals consciously reject the content of the stereotype.

Computational systems lack this spontaneous activation mechanism. They require explicit instruction.

3. Structural Neutrality vs Affective Charge

Software stereotypes are semantically neutral. They describe architecture or role.

Human stereotypes are rarely neutral. They are linked to affective valence (positive, negative, ambivalent). Emotional activation influences attention, memory encoding, and behavioral readiness. Fear, admiration, contempt, envy, or threat can be attached to stereotyped categories.

No conventional programming model integrates emotional valence as an intrinsic component of categorization.

4. Immediate Revision vs Cognitive Inertia

If a software stereotype is incorrect, it can be modified instantly. There is no resistance.

Human stereotypes show inertia. Even when confronted with disconfirming evidence, individuals may:

- reinterpret the evidence,

- treat the case as an exception,

- selectively remember confirming instances.

This resistance is supported by confirmation bias and motivated reasoning. The stereotype defends its own coherence.

Computational stereotypes lack self-protective bias.

5. Static Assignment vs Contextual Modulation

In software, stereotypes are static unless manually changed.

In humans, stereotype reliance increases under cognitive load, time pressure, or stress (Gilbert & Hixon, 1991). Dual-process models (Chaiken & Trope, 1999; Kahneman, 2011) describe how reduced cognitive resources shift processing toward automatic categorization.

Executive control mechanisms located in prefrontal cortical systems (Amodio, 2008) can inhibit stereotype-consistent responses, but this inhibition requires cognitive resources.

Thus, stereotype expression depends on contextual modulation and cognitive control capacity. No such modulation layer exists in conventional software stereotypes.

6. No Identity Layer vs Identity-Linked Mechanism

Software systems do not possess identity. A class does not belong to an in-group or out-group.

Human stereotypes are embedded in social identity structures. Group membership affects self-concept, status perception, and power dynamics. Categorization alone (as shown in minimal group experiments) can produce preferential treatment for one’s own group.

This identity coupling gives stereotypes social force. It connects them to hierarchy and dominance.

7. No Competition vs Competing Cognitive Frames

In code, multiple stereotypes can coexist on the same element without conflict unless a conflict is explicitly programmed.

In the human mind, multiple stereotypes may activate simultaneously and compete. Context determines which frame dominates. The mind arbitrates between competing categorizations, often unconsciously.

This dynamic competition is absent from formal stereotype models in software.

8. No Performative Loop vs Self-Fulfilling Dynamics

Perhaps the most profound difference concerns feedback.

Software stereotypes describe structure; they do not reshape reality beyond program behavior.

In social psychology, self-fulfilling prophecy effects (Merton, 1948) and expectancy effects (Rosenthal & Jacobson, 1968) demonstrate how beliefs about groups can alter treatment, which in turn influences outcomes.

Stereotype threat (Steele & Aronson, 1995) is one such mechanism. Belief influences performance, and performance then appears to validate belief.

This recursive socio-behavioral feedback loop has no equivalent in conventional computational stereotype systems.
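The shape of such a loop can be shown with a deliberately simple toy model. Everything in it is an assumption made for illustration: support is set equal to expectation, performance is ability multiplied by support, and the observer revises the belief toward observed performance. It is not drawn from the cited studies and makes no empirical claim.

```python
def run_loop(expectation, ability=1.0, rounds=10, learning_rate=0.5):
    """Iterate belief -> treatment -> performance -> belief and record performance."""
    history = []
    for _ in range(rounds):
        support = expectation             # treatment follows belief
        performance = ability * support   # outcome depends on treatment
        # The observer sees only performance and revises the belief toward it.
        expectation += learning_rate * (performance - expectation)
        history.append(performance)
    return expectation, history


# Two groups with identical ability but different starting expectations.
high_belief, high_history = run_loop(expectation=0.9)
low_belief, low_history = run_loop(expectation=0.5)
```

In this toy setting each belief is confirmed on every round: the group expected to reach 0.5 performs at 0.5, although its ability is identical to the other group's. The observer never receives evidence that would separate ability from treatment.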

9. Compression Without Variance Modeling

Human stereotypes compress intra-group variability. They apply a group-level average to individuals, ignoring distribution spread and variance.

Software stereotypes do not approximate distributions. They assign categorical membership precisely. They do not collapse statistical dispersion into identity labels.
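What is lost when a group average is applied to individuals can be illustrated with two small, invented score lists. The data below are made up for this example and stand for no real groups.

```python
from itertools import product
from statistics import mean, pstdev

# Invented scores: group A averages higher, but the distributions overlap.
group_a = [52, 61, 70, 79, 88]
group_b = [47, 56, 65, 74, 83]


def share_reversed(higher, lower):
    """Fraction of cross-group pairs in which the 'lower' group's member scores higher."""
    pairs = list(product(higher, lower))
    return sum(b > a for a, b in pairs) / len(pairs)


mean_gap = mean(group_a) - mean(group_b)       # difference between group averages
spread = pstdev(group_a)                        # spread within a single group
reversed_share = share_reversed(group_a, group_b)
```

The averages differ by 5 points, the spread within each group is more than twice that, and in 40% of cross-group pairs the individual from the lower-average group scores higher. A label that carries only the average discards exactly this information.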

Taken together, these differences show that computing has implemented the structural shell of stereotyping — role assignment and semantic extension — but not its cognitive engine.

Human stereotyping is:

- probabilistic,

- automatic,

- affectively charged,

- identity-linked,

- context-modulated,

- resistant to revision,

- and capable of reshaping social reality through feedback loops.

Software stereotypes are:

- deterministic,

- explicit,

- neutral,

- static,

- and fully revisable.

The gap is not merely semantic. It is architectural.

Conclusion

Software engineering uses stereotypes as formalized roles that structure systems. They are precise, controllable, and transparent. They extend semantics without ambiguity. They exist because an architect decided they should.

Human stereotypes, by contrast, are not designed. They emerge. They are adaptive compressions of reality shaped by evolution, culture, power, and historical contingency. They operate automatically, probabilistically, and emotionally. They resist correction, compete for dominance, and can reshape social reality through recursive feedback loops.

The philosophical tension lies here: computing has mastered explicit categorization, but human cognition is governed by implicit categorization.

In software, categories are tools. In the human mind, categories are forces.

A UML stereotype does not defend itself. A human stereotype does. A software annotation does not feel threatened. A human identity does. A design pattern does not alter the world that names it. A social stereotype can alter the world it describes.

What computing models is classification as architecture. What psychology reveals is classification as power.

This distinction matters. The moment we attempt to build recommendation systems, or to simulate cognition and social reasoning in machines, we move from neutral role assignment toward systems that may inherit probabilistic bias, feedback amplification, and identity-sensitive categorization.

The danger is not that machines use stereotypes. Machines already do, in the form of statistical generalization. The danger is that we mistake structural labeling for an understanding of cognitive dynamics.

If we ever build computational systems that integrate uncertainty, graded belief, contextual modulation, competitive activation, affective weighting, and recursive feedback, we will no longer be implementing stereotypes as metadata. We will be implementing something closer to human judgment — with all its efficiency, and all its risk.

The deeper question, then, is not whether computing can model stereotypes. It is whether we fully understand the mechanism we would be modeling.

To formalize stereotypes is easy. To formalize their consequences is not.

Alexandre Vialle

When Cognitive Prototype Theory Echoed in Programming Language Design

In the mid-1970s, Eleanor Rosch demonstrated that human categories are not defined by rigid boundaries but organized around prototypes — more typical examples that anchor conceptual space. A robin is a more central “bird” than a penguin. Category membership is graded, structured by resemblance and centrality rather than strict essence.

Roughly a decade later, in a very different field, the programming language Self (Ungar & Smith, 1986–87) challenged class-based inheritance in software design. Instead of defining objects through abstract classes, Self used concrete prototypes. New objects were created by cloning and modifying existing exemplars.
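The clone-and-modify idea can be approximated in Python as a loose analogy. This is not Self syntax, and the sketch leaves out Self's delegation through parent slots; the `Proto` class and its slot names are hypothetical.

```python
import copy


class Proto:
    """An object described only by its slots; no class hierarchy is involved."""

    def __init__(self, **slots):
        self.slots = dict(slots)

    def clone(self, **changes):
        # Copy the exemplar, then record how the new object differs from it.
        new = Proto(**copy.deepcopy(self.slots))
        new.slots.update(changes)
        return new


robin = Proto(name="robin", can_fly=True, sings=True)
penguin = robin.clone(name="penguin", can_fly=False)
```

The new object is defined by its differences from a concrete exemplar rather than by membership in an abstract class, and the exemplar itself is left unchanged.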

The two developments were historically independent. Yet philosophically, they resonate.

Both moved away from essentialist hierarchies toward exemplar-based organization. Both privileged concrete instances over abstract taxonomies. Both suggested that structure can emerge from variation around a center rather than from rigid top-down definition.

In cognitive science, this shift reframed how we understand categorization in the mind. In programming language design, it reframed how we organize structure in code.

The echo is subtle but striking: when we changed how we thought about categories, we also began changing how we built them.